Analysis, part one: understanding the data

The validations of 2,617 witnesses produced the following data points (as of August 2025):

- 189,441 variants;

- Of these, 100,484 variants were distinct.

The finished validations were extracted from the Google Sheets by Roseingrave into JSON files. Including notes and comments left by our RAs and team members during the validation process, the combined JSON file initially consisted of roughly 1.2 million lines of data. It goes without saying that this is obviously beyond the analytical capabilities of any single person or even a group of people, which is why we employed several computerised approaches to make sense of this data.

Our most important task was to make the computer understand our notation system. Although we were careful to be as consistent and precise as possible, we were well aware that errors and typos may have crept in at various points. We therefore had to develop an approach that not only identified and catalogued all valid variants: the same approach should also be able to identify invalid variants and tell us exactly where these were.

Regular expressions are absolutely essential to this step. A regular expression is ‘a sequence of characters that specifies as a match pattern in text’ (Wikipedia). Having built up a familiarity with our notation system through hundreds of collations and validations, regular expressions were used to tell the computer exactly what a valid variant is and which variant category it belongs to. We essentially created a dictionary for the computer to consult while going through each individual variant in our JSON file.

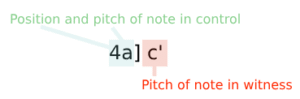

Take our variant 4a] c'. Its constituent parts are the following:

4: the position in the bar;a: the target note in the control;]: the closing square bracket and the non-optional whitespace indicate the separation between the control reading and the witness reading;c': the reading in the witness.

Though our dictionary does not explicitly give the computer the understanding of what this variant is or what it means (we are not training the computer in any way), our script is able to recognise this above variant as belonging to the Pitch changed category.

The Texting Scarlatti dictionary is at the core of two essential analysis scripts which are freely available on our public GitHub (link available soon):

- The variant analyser;

- The NEXUS converter.

The variant analyser

This script analyses variants from our JSON data file. It categorises and counts different types of variants (such as absent bars, extra bars, and specific variant patterns) for each witness, compares variants between witnesses, and writes detailed analysis reports and warnings to output files (one for each sonata). The script supports analysing multiple sonatas at once and provides statistics and comparisons for each sonata. To count and categorise each individual variant across our entire dataset takes about 2.5 seconds. In its first ever run, the variant analyser detected around 4,500 invalid variants, meaning about 97.6% of our data were valid entries. The remaining 2.4% were all corrected by hand. Note that while our dataset now consists of 100% valid entries, that does not necessarily mean they are 100% correct!

The NEXUS converter

This script converts Scarlatti sonata variant data into NEXUS files for phylogenetic analysis. It loads sonata data from a JSON file and, using our dictionary for variant mappings, processes as many sonatas as we ask it to, generating NEXUS files for each of these sonatas. Since this script creates a phylogenetic matrix which can be quite difficult to read for a human, the option exists to create an extensive log of the process so that we can verify exactly what variant is encoded as what character and on which position of the variant matrix. Output files are ready for use with phylogenetic software such as PAUP*, SplitsTree 6, or similar tools. Converting our entire dataset into NEXUS files takes around 3.5 seconds.

Discussion

The benefits of the use of computer scripts to categorise and count variants are clear: no human would be able to process the entirety of the Texting Scarlatti dataset in under 3 seconds! The creation of the variant tables and witness-to-witness comparisons are not the end of the road but rather an invitation to take a closer look at the data. If two witnesses have many variants in common, are these meaningful? Do they indicate possible kinship, or are they coincidences? It still takes expert analysis as well as other evidence to come to potential explanations. What our analysis scripts do is provide the user with a solid starting point. It has taken our contributors over 10,000 hours to create this dataset. It is now up to you, the user, to realise its potential for use in expert musicological research!

In our next Analysis discussion piece we will consider some examples from the Texting Scarlatti dataset and how you might use our dataset for your own research. Of course, adjusting the ‘levers’ in the individual scripts requires some musical knowledge, but we hope our tutorial is clear about what the possibilities are!

Jasper van der Klis, October 2025